Journal of Plant Science and Research Impact Factor 2025-2026

Journal of Plant Science and Research Impact Factor Year Wise

Note: This journal information is taken from the Journal Citation Reports™ (Clarivate).


Journal of Plant Science and Research Details

| Field | Details |
|---|---|
| Journal Name | Journal of Plant Science and Research |
| Journal Abbreviation | Journal of Plant Science and Research Abbreviation |
| ISSN (Online) | 2349-2805 (23492805) |
| Impact Factor | Journal of Plant Science and Research Impact Factor |
| CiteScore | Journal of Plant Science and Research CiteScore |
| Acceptance Rate | Journal of Plant Science and Research Acceptance Rate |
| SCImago Journal Rank | Journal of Plant Science and Research SJR (SCImago Journal Rank) |

Evaluating scientific quality is a notoriously difficult problem with no standard solution. Ideally, published scientific results should be scrutinized by true experts in the field and scored for quality and quantity according to established rules.

In practice, however, the task falls to committees and institutions, which increasingly turn to metrics such as the IF as an alternative way to evaluate research. The quality and prestige of a journal are often gauged with such metrics, and one of the most widely used is the Impact Factor. The Impact Factor (IF), or journal impact factor (JIF), measures how many times, on average, an article that a journal published during the two preceding years is cited in a given year. The figure is calculated and published by Clarivate Analytics, which has full control over it. Historically, only journals indexed in the Science Citation Index Expanded (SCIE) and the Social Sciences Citation Index (SSCI) received an Impact Factor; since the 2023 release of the JCR, Clarivate has also assigned one to journals in its Arts & Humanities Citation Index (AHCI) and Emerging Sources Citation Index (ESCI).

Clarivate publishes the impact factor each year in its Journal Citation Reports (JCR), available through the Web of Science. Journals use the impact factor to demonstrate their value, and it is commonly used to measure a journal's relative importance within its field: journals with higher impact factors are considered more important and more prestigious than those with lower values. In other words, it ranks journals by counting how often their articles are cited. Besides the standard 2-year Impact Factor, the JCR also reports a 5-year Impact Factor; variants with other citation windows, such as 3-year and 4-year impact factors, as well as “real-time” impact factor estimates, can provide further insight into the impact of the Journal of Plant Science and Research.

The impact factor is useful for putting total citation frequencies into perspective. It removes some of the bias of raw citation counts, which favor large journals over small ones, frequently issued journals over less frequently issued ones, and older journals over newer ones. Older journals in particular have a larger citable body of literature than younger or smaller journals simply because they have existed longer. All else being equal, the more articles a journal has previously published, the more often it will be cited.
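The size normalization described above can be shown with a short Python sketch. The two journals and all of their counts are invented for illustration, and the helper `citations_per_item` is a hypothetical name, not part of any Clarivate tool.

```python
def citations_per_item(citations: int, citable_items: int) -> float:
    """Average citations per citable item: the size-normalized citation rate."""
    if citable_items <= 0:
        raise ValueError("citable_items must be positive")
    return citations / citable_items


# Hypothetical journals: (total citations received, citable items published)
journals = {
    "Large journal": (3000, 1500),
    "Small journal": (600, 200),
}

# Ranking by raw totals favors the large journal...
top_by_total = max(journals, key=lambda name: journals[name][0])
# ...while dividing by the number of items favors the journal cited more per article.
top_by_rate = max(journals, key=lambda name: citations_per_item(*journals[name]))

for name, (cites, items) in journals.items():
    print(f"{name}: {cites} citations, {citations_per_item(cites, items):.2f} per item")
print("Top by total:", top_by_total)
print("Top by rate: ", top_by_rate)
```

Here the large journal collects five times as many citations, yet the small journal's articles are cited more often on average (3.00 versus 2.00 per item), which is the comparison the impact factor is designed to make.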

The impact factor was devised by Eugene Garfield, founder of the Institute for Scientific Information (ISI). Impact factors have been calculated every year since 1975 for journals listed in the Journal Citation Reports (JCR). ISI was acquired by Thomson Scientific & Healthcare in 1992 and renamed Thomson ISI. In 2016, Thomson Reuters sold its Intellectual Property & Science business, which included the former ISI, to Onex Corporation and Baring Private Equity Asia, which set it up as an independent company, Clarivate Analytics; Clarivate now publishes the JCR.

How Is the Impact Factor Calculated?

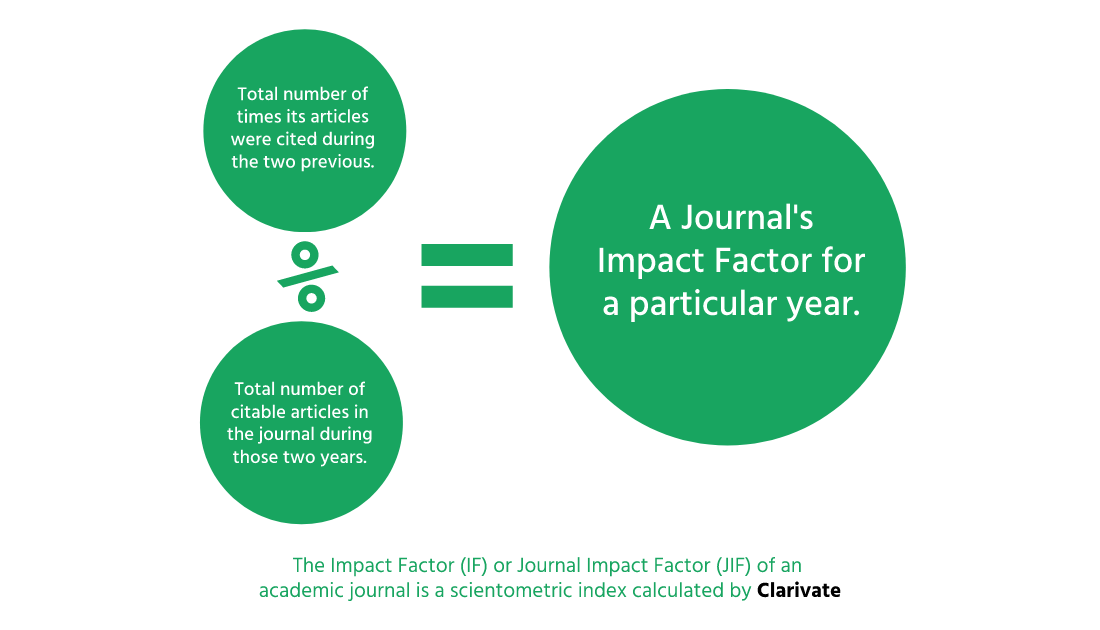

The JCR provides quantitative tools for ranking, evaluating, categorizing, and comparing journals indexed in the citation indexes mentioned above. The impact factor is one of these tools. It is the frequency with which an “average article” in a journal has been cited in a particular year. The impact factor released annually in the JCR is a single number: the ratio between citations and recent citable items. Specifically, a journal's impact factor is obtained by dividing the number of citations received in the current year by items the journal published in the previous two years by the number of citable items it published in those two years. Although the impact factor is a way to quantify a journal's prestige, using it sensibly requires knowing how it is calculated.

The 2025 Impact Factor of the Journal of Plant Science and Research is the mean number of citations received in 2025 by papers published in the Journal of Plant Science and Research during the two preceding years, 2023 and 2024.

The calculation itself is simple. It is based on a two-year window and consists of dividing the number of times the journal's recent articles were cited by the number of citable items it published. The impact factor of the Journal of Plant Science and Research is calculated as follows.

A = the number of citations received during 2025, from indexed journals, by articles published in 2023 and 2024.

B = the total number of citable items published during 2023 and 2024.

A/B = 2025 impact factor
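A minimal Python sketch of the A/B calculation, assuming the citation counts have already been grouped by the publication year of the cited item. The function `impact_factor` and all counts are hypothetical; Clarivate applies its own rules for deciding which items are citable.

```python
def impact_factor(citations_by_pub_year: dict[int, int],
                  citable_items_by_year: dict[int, int],
                  jif_year: int) -> float:
    """Two-year impact factor for `jif_year`.

    A = citations received in jif_year by items published in the two preceding years
    B = citable items published in those same two years
    """
    window = (jif_year - 2, jif_year - 1)
    a = sum(citations_by_pub_year.get(y, 0) for y in window)
    b = sum(citable_items_by_year.get(y, 0) for y in window)
    if b == 0:
        raise ValueError("no citable items in the two-year window")
    return a / b


# Hypothetical citations received in 2025, keyed by the cited item's publication year.
# Citations to 2022 items fall outside the window and are ignored.
citations_2025 = {2022: 95, 2023: 210, 2024: 240}
citable_items = {2023: 90, 2024: 90}

print(impact_factor(citations_2025, citable_items, 2025))  # 450 / 180 = 2.5
```

Widening `window` to the five preceding years would give the 5-year variant mentioned earlier.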

Some of the journals listed in the JCR are not citing journals but cited-only journals. This matters when journals are compared by impact factor alone, because the self-citations of a cited-only journal are not included in its impact factor calculation. Self-citations typically represent about 13% of the citations a journal receives. To remove their effect, a revised impact factor can be calculated:

A = citations in 2025 to articles published in 2023 and 2024

B = self-citations in 2025 to articles published in 2023 and 2024

C = A - B = total citations minus self-citations

D = number of citable items published in 2023 and 2024

E = C/D (revised impact factor)
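The revised calculation can be sketched the same way. The function name `revised_impact_factor` and the example counts are hypothetical; the 60 self-citations out of 450 were chosen to sit near the 13% share quoted above.

```python
def revised_impact_factor(total_citations: int, self_citations: int,
                          citable_items: int) -> float:
    """E = (A - B) / D: the impact factor with journal self-citations removed."""
    if citable_items <= 0:
        raise ValueError("D must be a positive number of citable items")
    if not 0 <= self_citations <= total_citations:
        raise ValueError("self-citations must lie between 0 and total citations")
    c = total_citations - self_citations  # C = A - B
    return c / citable_items              # E = C / D


# Hypothetical: A = 450 citations, B = 60 self-citations, D = 180 citable items
print(round(revised_impact_factor(450, 60, 180), 3))  # 390 / 180
```

With no self-citations the function reduces to the ordinary A/B impact factor, which makes the two figures easy to compare side by side.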

Use of Impact Factor

Informed and careful use of impact factor data is crucial. Uninformed users may be tempted to draw ill-founded conclusions from impact factor statistics unless they are made aware of several caveats. The impact factor has many legitimate uses, but it can also be misused. Because journal impact factors are readily available, it is tempting to use them to gauge the performance of individual scientists or research groups. If the journal is assumed to be representative of its articles, the impact factors of the journals in which an author has published can be combined into an apparently objective, quantitative measure of the author's scientific achievement. This is often seen as an easier way to judge a scientist's or research group's standing than traditional methods such as peer review.
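The journal-based proxy described in this paragraph can be compared with what an author's articles actually earned. This is a minimal sketch assuming each article's own citations and its journal's impact factor are counted over comparable windows; the `Article` record, the publication list, and all numbers are hypothetical.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Article:
    journal_if: float  # impact factor of the journal the article appeared in
    citations: int     # citations the article itself received in the same window


# Hypothetical publication record of one author
articles = [
    Article(journal_if=6.0, citations=1),
    Article(journal_if=6.0, citations=0),
    Article(journal_if=2.0, citations=9),
]

proxy = statistics.fmean(a.journal_if for a in articles)  # journal-based estimate
actual = statistics.fmean(a.citations for a in articles)  # what the articles earned
print(f"journal-IF proxy = {proxy:.2f}, actual mean citations = {actual:.2f}")
```

The two numbers agree only if every article is cited exactly at its journal's average; here the most-cited article appeared in the lowest-IF journal, so the proxy overstates the record while misattributing where its impact came from.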

Given how widely journal impact factors are now used, and how explicitly journal prestige enters research evaluation, the indicator warrants critical examination. The impact factor matters a great deal to a journal such as the Journal of Plant Science and Research. Several of its limitations are discussed below.

Issues and limitations of Impact Factor

Decisions based on journal impact factors can be misleading when the uncertainty associated with the measure is ignored. Journal impact factors and their ranks should be interpreted with caution, and a measure of uncertainty should always be presented alongside the point estimate. Journals should follow their own guidelines for presenting data by including a measure of uncertainty when quoting performance indicators such as the journal impact factor. The impact factor should be used with awareness of the many circumstances that affect citation rates, for example, the average number of references cited per article in a field, and it should be combined with informed peer review. In academic evaluations for tenure, for instance, it is sometimes inappropriate to use the impact factor of the source journal to estimate the expected citation frequency of a recently published article, because citation frequencies of individual articles vary widely.
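One common way to attach a measure of uncertainty to an impact factor is a percentile bootstrap: resample the per-article citation counts with replacement and recompute the mean many times. The text does not prescribe a method, so the bootstrap here is one choice among several. The sketch assumes the 2025 citation count of every citable item from 2023 and 2024 is known; the helper `bootstrap_ci` and the counts are hypothetical.

```python
import random
import statistics


def bootstrap_ci(counts, n_resamples=10_000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the mean citations per item."""
    rng = random.Random(seed)  # seeded so the interval is reproducible
    n = len(counts)
    # Resample the articles with replacement and recompute the mean each time.
    means = sorted(statistics.fmean(rng.choices(counts, k=n)) for _ in range(n_resamples))
    k = round(n_resamples * alpha / 2)  # number of resampled means trimmed from each tail
    return means[k], means[n_resamples - k - 1]


# Hypothetical 2025 citation counts, one per citable item published in 2023-2024
counts = [0, 0, 1, 1, 1, 2, 2, 3, 3, 4, 5, 6, 8, 12, 30]
point = statistics.fmean(counts)  # the impact factor itself (78 / 15)
low, high = bootstrap_ci(counts)
print(f"IF = {point:.2f}, 95% bootstrap CI [{low:.2f}, {high:.2f}]")
```

With only fifteen items and one heavily cited outlier, the interval is wide, which is exactly the information a bare point estimate hides.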

Issues related to using impact factors

- Journal impact factors are not statistically representative of individual journal articles

- Journal impact factors correlate poorly with the actual citations of individual articles

- Authors use many criteria other than impact when submitting to journals

- Citations to “non-citable” items are sometimes incorrectly included in the database

- Self-citations are not corrected for

- Review articles are heavily cited and inflate the impact factor of journals

- Long articles collect many citations and raise journal impact factors

- A short publication lag allows many short-term journal self-citations and produces an inflated journal impact factor

- A journal's authors tend to prefer citing work in the journal's national language

- Selective journal self-citation

- The coverage of the database is incomplete

- The database doesn’t include books as a citation source

- There is an English-language bias in the database

- American publications dominate the database

- The set of journals in the database may vary from year to year

- The impact factor is a function of the number of references per article in the research field

- Research fields whose literature rapidly becomes obsolete are favored

- The impact factor depends heavily on the dynamics (expansion or contraction) of the research field

- Small research fields tend to lack journals with a high impact factor

- The journal impact factor is strongly determined by relations between fields (clinical v basic research, for example)

- The citation rate of an article determines the journal's impact, not the other way around
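Several of the points above follow from the skewness of citation distributions: a few highly cited items (review articles, for example) pull the mean, which is what the impact factor reports, well above what a typical article receives. A minimal sketch with invented counts:

```python
import statistics

# Hypothetical 2025 citation counts for ten citable items published in 2023-2024
counts = [0, 0, 1, 1, 1, 2, 2, 3, 5, 45]  # one heavily cited item, e.g. a review

impact_factor = statistics.fmean(counts)  # the journal-level figure: 60 / 10
median = statistics.median(counts)        # what a typical article receives
below = sum(c < impact_factor for c in counts)
without_top = statistics.fmean(sorted(counts)[:-1])  # drop the single most-cited item

print(f"impact factor (mean): {impact_factor:.1f}")
print(f"median citations:     {median}")
print(f"{below} of {len(counts)} items are cited less often than the impact factor")
print(f"impact factor without the top item: {without_top:.2f}")
```

In this made-up journal, nine of ten articles fall below the impact factor, and removing one item cuts the figure from 6.0 to about 1.67.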

Journal impact factors are representative only when the evaluated research is exactly average relative to the journals used, an assumption that makes any evaluation superfluous. In actual practice, even samples as large as a nation's scientific output are far from random and are not representative of the journals they were published in. In many research fields, such as mathematics, a major part of the intellectual output is published as books, which are not part of the database and therefore have no impact factor. This limits the usefulness of the JCR for such fields.

The IF of a journal such as the Journal of Plant Science and Research does not measure the quality of its peer-review process or of its content; it reflects only the average number of citations its recent articles receive from other publications indexed by Clarivate. In an ideal world, evaluators would read each article and make personal judgments, but this is not practical. Clarivate itself receives more than 10 million entries each year when calculating the JIF, so careful peer review of every item is unrealistic at that scale. Many of the limitations listed above could be addressed with the right adjustments, but doing so requires effort from both Clarivate Analytics and the wider scientific community.